Over the past few months, Cyara’s Domain Consulting team has been sharing insights about DevOps/Agile trends we’ve observed while working with our customers. We wrote about the goals of CX leaders and identified a DevOps/Agile maturity scale for organizations. In this post, I’d like to cover a trend we’ve identified around feedback loops in the DevOps/Agile process.

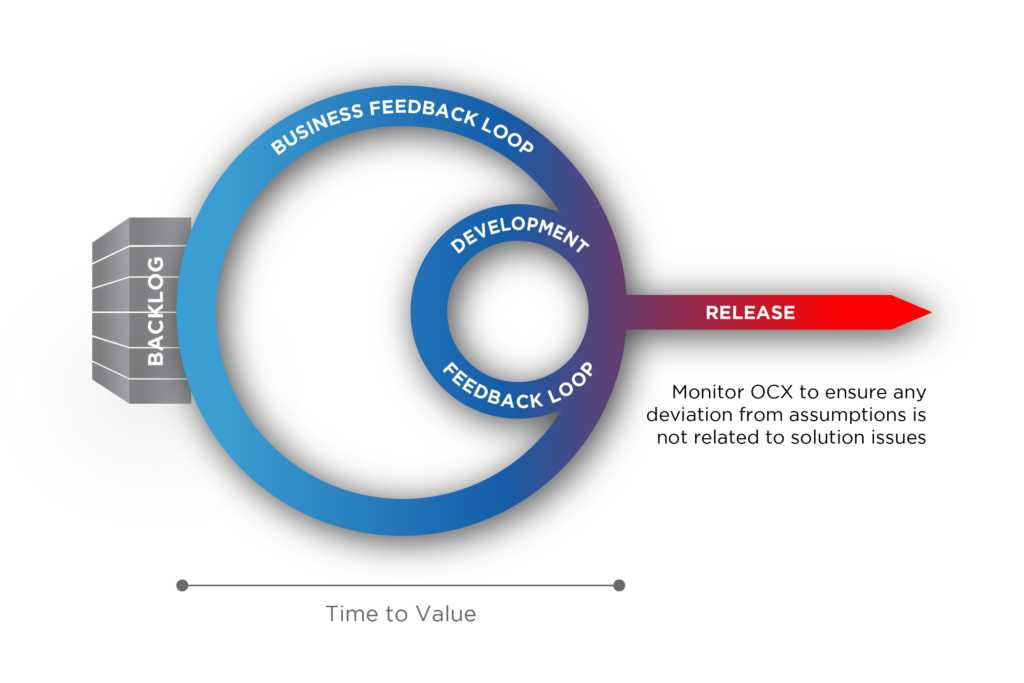

One important aspect of DevOps is the feedback loop it relies on for continuous improvement. In our experience, the DevOps feedback loop should also be supplemented by a business feedback loop.

In the model above, the left-hand side of the diagram shows the product or service backlog containing all the features, and the right-hand side shows everything that has been released into production.

The Development Feedback Loop

The first objective of any organization is to achieve the shortest possible time to value. This objective requires the organization to focus on the following:

- Continuous Improvement: constantly strive to improve the system that delivers value

- Balance: to maintain a sustainable cadence, teams need to balance delivering incremental value with the product or service, keeping technical debt under control, and looking after the people, processes, and tools that develop the product or service

To be successful in this loop, it’s important to consider several factors. The first is batch size: the number of features included in each release. The ideal batch size is one, meaning the team should aim to ship one feature with each release.

An additional consideration in this loop is feature time. This is the amount of time spent doing value-adding work relative to the total time it takes to go from identifying a feature to releasing it. The goal is to maximize the percentage of time spent on value-adding work and minimize the time spent on rework and non-value-adding activities.
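As a rough illustration, feature time can be expressed as a ratio (lean practitioners often call it flow efficiency). The sketch below assumes a team records, per feature, the hours of value-adding work and the total elapsed hours from identification to release; the `FeatureTimeline` record, its field names, and the sample numbers are hypothetical, not part of any Cyara product.

```python
from dataclasses import dataclass


@dataclass
class FeatureTimeline:
    """Hypothetical record of one feature's journey from backlog to production."""
    name: str
    value_adding_hours: float  # time spent designing, building, and testing the feature
    lead_time_hours: float     # total elapsed time from identifying the feature to releasing it


def feature_time_pct(feature: FeatureTimeline) -> float:
    """Return value-adding time as a percentage of total lead time."""
    if feature.lead_time_hours <= 0:
        raise ValueError("lead time must be positive")
    return 100.0 * feature.value_adding_hours / feature.lead_time_hours


# Illustrative numbers only
features = [
    FeatureTimeline("balance-inquiry menu", value_adding_hours=40, lead_time_hours=200),
    FeatureTimeline("card-activation flow", value_adding_hours=30, lead_time_hours=60),
]
for f in features:
    print(f"{f.name}: {feature_time_pct(f):.1f}% value-adding")
```

A low percentage, like the first feature’s, usually points to waiting, hand-offs, or rework rather than to slow development.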

Finally, teams need to consider the percentage of code that is complete and accurate. For each stage of the process, teams should examine instances where work leaves one stage but later returns to it. For example, how often is a feature released into production successfully without having to be rolled back? How often are errors found in testing, sending the work back to development? The goal is to minimize rework, which is key to increasing the time spent on value-adding features.
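One way to put a number on this is to count, for each stage, how many work items left it and how many were later sent back. The sketch below is a minimal example under that assumption; the function name, stage labels, and counts are hypothetical and only mirror the two examples above (errors found in testing, releases rolled back).

```python
def percent_complete_accurate(items_passed: int, items_returned: int) -> float:
    """Share of work leaving a stage that never had to come back to it, as a percentage."""
    if items_passed <= 0:
        raise ValueError("at least one item must have left the stage")
    if not 0 <= items_returned <= items_passed:
        raise ValueError("returned items must be between 0 and items passed")
    return 100.0 * (items_passed - items_returned) / items_passed


# Hypothetical counts for one quarter:
# (work items that left the stage, items later sent back to that stage)
stage_counts = {
    "development": (50, 10),  # 10 features returned after testing found errors
    "release": (20, 1),       # 1 release rolled back from production
}
for stage, (passed, returned) in stage_counts.items():
    pct = percent_complete_accurate(passed, returned)
    print(f"{stage}: {pct:.1f}% complete and accurate")
```

Tracking this per stage shows where rework originates, which is more actionable than a single end-to-end defect count.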

The Business Feedback Loop

The business feedback loop is a means of validating assumptions about the business value of new features. When a new feature is conceived, it’s often difficult to determine precisely how much business value it will drive. That said, the organization will have hypotheses, and the business feedback loop can help validate and refine them.

In this loop, a team should focus on one metric that matters at any given time. For instance, imagine a contact center operations team at a bank. One metric that matters for them might be cost to serve. At a more granular level, one way to reduce the cost to serve might be to improve the self-service rate: the percentage of calls that can be completed without having to go to an agent.
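To make the self-service rate concrete, the sketch below computes it from a list of call records. It assumes each call can be flagged as resolved in self-service or not; how a real contact center classifies calls (for example, how it treats callers who hang up inside the IVR) depends on its own reporting, so the `CallRecord` type and its field are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class CallRecord:
    """Hypothetical summary of one inbound call."""
    call_id: str
    resolved_in_self_service: bool  # True if the call was completed without reaching an agent


def self_service_rate(calls: list[CallRecord]) -> float:
    """Percentage of calls completed in self-service, without going to an agent."""
    if not calls:
        raise ValueError("no calls to measure")
    contained = sum(1 for c in calls if c.resolved_in_self_service)
    return 100.0 * contained / len(calls)


# Illustrative sample: 5 of 8 calls completed without an agent
calls = [CallRecord(f"call-{i}", resolved_in_self_service=(i < 5)) for i in range(8)]
print(f"Self-service rate: {self_service_rate(calls):.1f}%")
```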

The goal (and this goes back to batch size) should be to release features one at a time so you can track each feature’s impact on the metric identified for that release. This specificity allows a correlation to be made: teams can baseline the metric before the feature is released, and then track how much the metric trends up or down afterward. When the batch size is large and a release includes many features, it becomes difficult to know which feature drove a change in the metrics you are tracking, because the change could be the result of a combination of features. This is why batch size is important in the business feedback loop.
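The baseline-and-compare approach above can be sketched as follows. This is a minimal illustration, assuming the metric is sampled daily (here, the self-service rate in percent) and that a simple before/after difference in averages is good enough for a first look; it does not control for seasonality or other outside factors. The function name and data are hypothetical. The function only names a responsible feature when the release contained exactly one, which reflects the batch-size point.

```python
from statistics import mean
from typing import Optional


def attribute_change(
    release_features: list[str],
    baseline: list[float],
    after_release: list[float],
) -> tuple[Optional[str], float]:
    """Compare a metric's average before and after a release.

    Returns (feature, change). The feature is None when the release bundled
    several features, because the change can't be attributed to any one of them.
    """
    if not baseline or not after_release:
        raise ValueError("need observations before and after the release")
    change = mean(after_release) - mean(baseline)
    feature = release_features[0] if len(release_features) == 1 else None
    return feature, change


baseline = [40.0, 42.0, 41.0, 39.0, 43.0]  # daily self-service rate (%) before the release
after = [44.0, 46.0, 45.0, 47.0, 43.0]     # daily self-service rate (%) after the release

# Batch size of one: the 4-point rise can be tied to a single feature
print(attribute_change(["faster balance lookup"], baseline, after))
# Batch size of three: the same rise can't be attributed to any one feature
print(attribute_change(["faster balance lookup", "new greeting", "menu reorder"], baseline, after))
```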

The Importance of Monitoring

When a feature release or change doesn’t have the expected impact, Cyara can help assess whether the cause is the feature operating incorrectly. For instance, imagine a team’s goal is to improve their self-service IVR. They may have designed a great way to do that, but the audio quality might be poor. If customers can’t understand what the IVR is saying, they won’t use it, despite the great design.

This is where the Pulse monitoring capabilities in the Cyara Platform come into play. The Domain Consulting team is working with many of our customers to identify ways to augment the Pulse Executive Dashboard with other data feeds, such as Net Promoter Score or KPIs from contact center solutions like average handle time and transfer rates.

To learn more about how Domain Consulting can help you, get in touch with your Account Executive.