This article was originally published on Botium’s blog on January 12, 2022, prior to Cyara’s acquisition of Botium.

One major pitfall of building chatbots is underestimating the importance of performance. The UI of a chatbot is usually very simple, so it’s easy to forget the complexity behind these virtual assistants. A slow chatbot might be acceptable for a hobby project, but a company cannot neglect performance. Bad performance is a serious UX killer.

Performance testing is the key to ensuring that your chatbot stays responsive under high load. The worst case is arguably a chatbot that stops answering altogether because it cannot recover from a load spike, whether that spike comes from legitimate clients or from a DDoS attack.

Stress Testing

To get a basic picture of your chatbot’s performance, we suggest starting with a stress test. In a stress test, we start with a small number of parallel users and gradually increase it during the run. After a few minutes, the test answers two fundamental questions:

First, and most important: how many parallel users can be served without slowing the system down? Identifying which parts are slow, of course, still requires human investigation.

Second, and no less important: which errors occur? A stress test is usually the first near-production scenario in which a chatbot experiences heavy load.

Let’s look at some real-life examples.

First Stress Test

By default, every parallel user has a very simple conversation with the bot, for example just saying ‘hello’. Each user waits for an answer and repeats the conversation until the test ends. Any response from the chatbot is accepted: if it sometimes answers ‘Hello User’ and sometimes ‘I don’t understand’, this has no impact on the performance results. The main goal is to get an answer at all; an HTTP error code or a missing answer is, of course, counted as a failure.
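
To make this default loop concrete, here is a minimal Python sketch of what each emulated user does. It is not Botium’s implementation: `fake_bot`, `virtual_user`, and `run` are hypothetical names, the chatbot is simulated with `asyncio.sleep`, and the 10-second default timeout is an assumption chosen to mirror the limit that shows up in the error report later in this article.

```python
import asyncio
import random

async def fake_bot(message: str) -> str:
    """Hypothetical stand-in for the chatbot under test."""
    await asyncio.sleep(random.uniform(0.01, 0.05))  # simulated processing time
    return random.choice(["Hello User", "I don't understand"])

async def virtual_user(send, stop_at: float, timeout: float, stats: dict) -> None:
    """Repeat a one-turn 'hello' conversation until the loop clock passes stop_at."""
    loop = asyncio.get_running_loop()
    while loop.time() < stop_at:
        try:
            # Any reply counts as a success; its content is not checked.
            await asyncio.wait_for(send("hello"), timeout=timeout)
            stats["ok"] += 1
        except asyncio.TimeoutError:
            stats["timeout"] += 1  # no answer within the timeout
        except Exception:
            stats["error"] += 1  # e.g. an HTTP error raised by a real client

async def run(users: int, duration: float, timeout: float = 10.0, send=fake_bot) -> dict:
    """Run `users` parallel virtual users for `duration` seconds and tally outcomes."""
    stats = {"ok": 0, "timeout": 0, "error": 0}
    stop_at = asyncio.get_running_loop().time() + duration
    await asyncio.gather(*(virtual_user(send, stop_at, timeout, stats) for _ in range(users)))
    return stats

if __name__ == "__main__":
    print(asyncio.run(run(users=5, duration=0.5)))
```

Because only the presence of a reply is checked, the random mix of ‘Hello User’ and ‘I don’t understand’ does not change the tally, which matches the default behavior described above.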

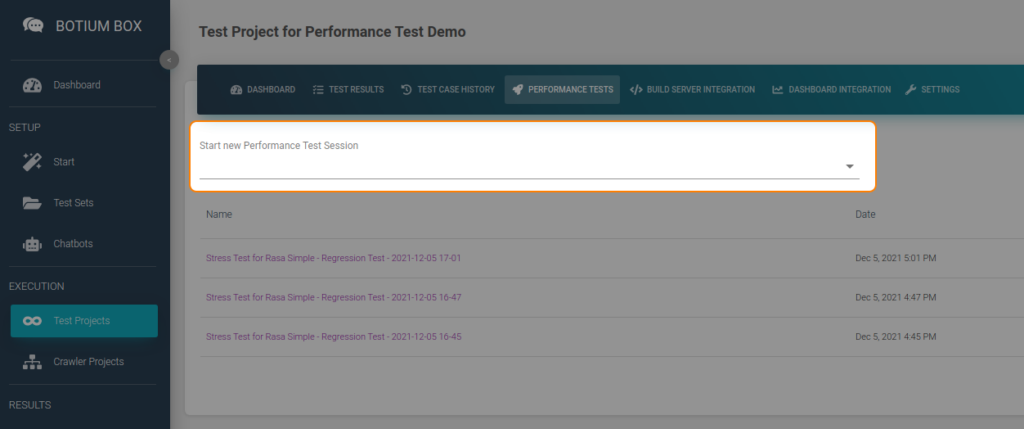

In order to start a Stress Test, we have to select the “Performance Tests” tab in our Test Project and choose Stress Test there.

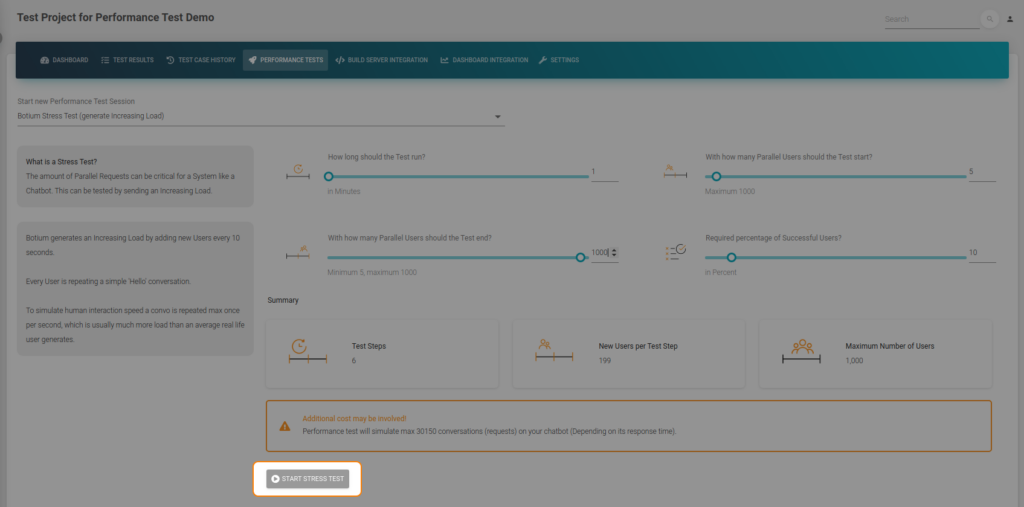

The duration can be 1 minute. For parallel users, let’s start with 5 and end with 1000. (You can enter 1000 by editing the field directly.)

“Required percentage of Successful Users?” can be set to 0%, because we don’t want to stop the test on failed responses (at least for now).

I have two goals with these settings: getting a first impression of the maximum number of parallel users the chatbot can serve, and discovering how the chatbot breaks under extreme load. In production, your chatbot could face much more load than expected, so you have to test for it.

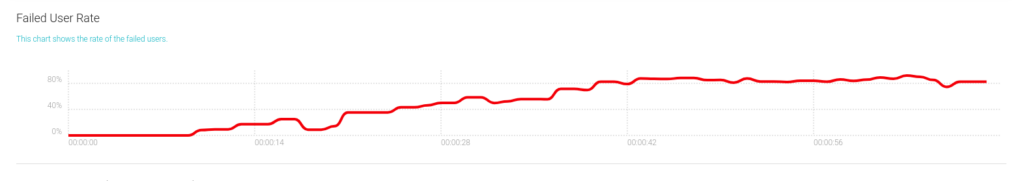

The first chart in the stress test results shows that there were errors.

We see that the bot easily handles 5 parallel users. But at the next step, when the number of parallel users rose above 200, the chatbot was already starting to fail.

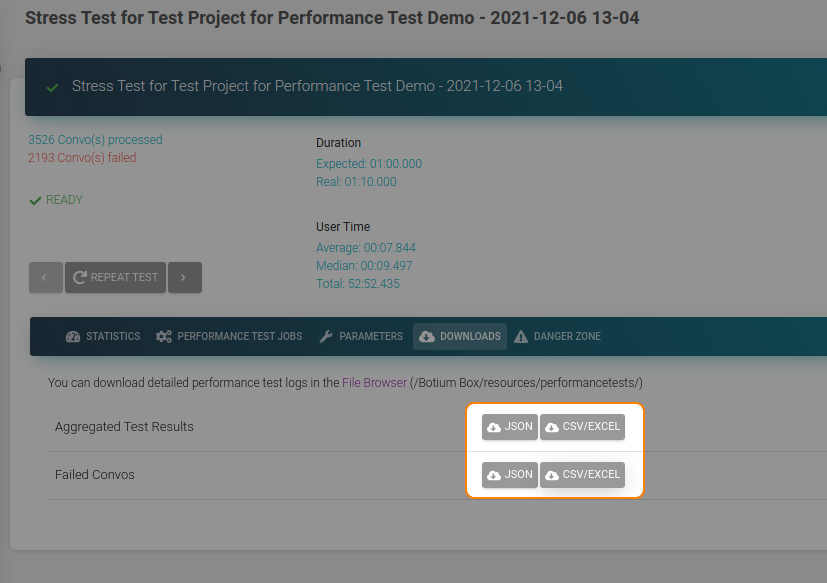

For more insights into the failures, you can download a detailed report.

This report contains all errors detected by Botium. The types of failures are:

- Timeout (Chatbot does not answer at all, or is too slow)

- Invalid response (API limit reached, server down, invalid credentials)

- Response with unexpected content (We send ‘hi’, and expect ‘Hello!’, but the chatbot answers with ‘Sorry some error detected come back later!’)
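
The three failure types can be expressed as a small classification function. This is a hypothetical sketch, not Botium’s code: it assumes the bot is reached over HTTP, that a missing reply is passed in as `None`, and the names `Outcome` and `classify` are made up for illustration.

```python
from enum import Enum
from typing import Optional

class Outcome(Enum):
    OK = "ok"
    TIMEOUT = "timeout"
    INVALID_RESPONSE = "invalid response"
    UNEXPECTED_CONTENT = "unexpected content"

def classify(status: Optional[int], text: Optional[str], expected: Optional[str] = None) -> Outcome:
    """Sort a single bot turn into the failure categories listed above.

    status and text are None when no reply arrived before the timeout.
    expected=None accepts any reply, like the default stress-test conversation.
    """
    if status is None or text is None:
        return Outcome.TIMEOUT
    if not 200 <= status < 300:
        # e.g. 429 (API limit reached), 401 (invalid credentials), 503 (server down)
        return Outcome.INVALID_RESPONSE
    if expected is not None and text != expected:
        return Outcome.UNEXPECTED_CONTENT
    return Outcome.OK
```

For the example in the last bullet, `classify(200, "Sorry some error detected come back later!", expected="Hello!")` returns `Outcome.UNEXPECTED_CONTENT`, while the default conversation (no `expected` value) would count the same reply as `Outcome.OK`.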

In my case there is just one kind of error in the report:

2168/Line 6: error waiting for bot — Bot did not respond within 10000ms

After 10s, Botium gave up waiting for the response: either the chatbot’s response time exceeded the limit, or it did not answer at all. Slowing down is common under extreme load, but receiving no answer at all is more critical and cannot be ignored.

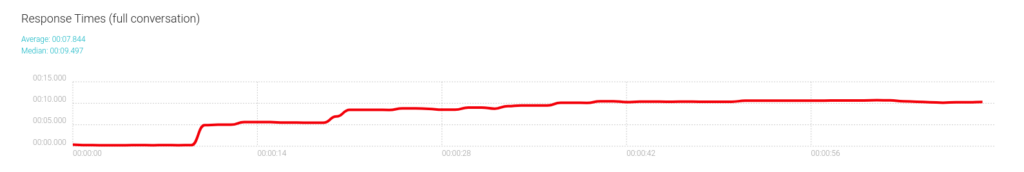

Checking the Response Times chart in the stress test results, I notice that the chatbot is simply too slow. The response time keeps increasing until it reaches 10s, and from then on most responses fail. The next step would be to check chatbot-side metrics and logs, or to increase the timeout in Botium. For the sake of simplicity, I will assume my impression is correct.

The first stress test showed me that the maximum number of parallel users is somewhere around 200, and that under heavy load the chatbot does not break but slows down significantly.

Second Stress Test

Our first stress test used very wide parameters. I had two goals there: getting rough figures for the maximum number of parallel users and checking what happens under extreme load. With the second stress test, I want to pin down the maximum number of parallel users more precisely.

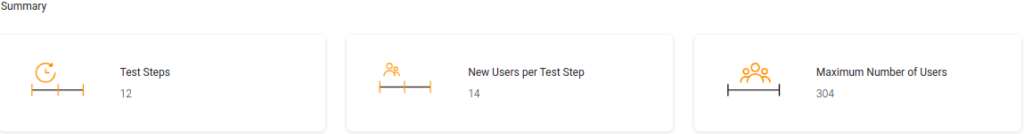

I set the test step duration to 2 seconds, meaning that Botium increases the number of parallel users every two seconds. I set the minimum number of users to 150 and the maximum to 300. With these parameters, Botium adds 14 new parallel users every 2 seconds until it reaches 300 after twelve steps.
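
The step arithmetic can be reproduced with a small ramp function. The rounding rule is an assumption, not documented Botium behavior: the sketch divides the user range by the number of increments, rounds up, and caps the final step at the maximum. With 150 to 300 users over twelve steps, that gives ceil(150 / 11) = 14 users per step, as in the text. `ramp_schedule` is a hypothetical name.

```python
import math

def ramp_schedule(start: int, end: int, steps: int) -> list[int]:
    """Parallel users per step for a linear ramp from start to end.

    Assumption: the increment is rounded up and the last step is capped at
    `end`; Botium's exact rounding rule may differ.
    """
    if steps < 2:
        return [end]
    increment = math.ceil((end - start) / (steps - 1))
    return [min(start + i * increment, end) for i in range(steps)]

print(ramp_schedule(150, 300, 12))
# [150, 164, 178, 192, 206, 220, 234, 248, 262, 276, 290, 300]
```

Purely as an illustration under the same assumed rule: if the first test had run in six steps (for example, 10-second steps over its 1-minute duration; its step length is not stated here), `ramp_schedule(5, 1000, 6)` would give `[5, 204, 403, 602, 801, 1000]`, so the second step would already be just above 200 users.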

This time, we set ‘Required percentage of Successful Users’ to 95%. Our goal now is not to push beyond the chatbot’s limit but to focus on normal usage. I already expect the test to fail because of too many timeouts; the question is when it will fail, that is, at which number of parallel users.
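
Botium evaluates this threshold itself; the sketch below only illustrates the idea of finding the user count at which a run drops below the required percentage. It assumes, hypothetically, that the success rate is checked per step as successful users divided by parallel users. The function name, the `(users, successful)` tuples, and the numbers in the example are made up, not measured results.

```python
from typing import Optional

def first_failing_step(steps: list[tuple[int, int]], required_pct: float) -> Optional[int]:
    """Return the parallel-user count of the first step below required_pct, or None.

    Each step is a (parallel_users, successful_users) pair.
    """
    for users, successful in steps:
        if users > 0 and 100.0 * successful / users < required_pct:
            return users
    return None

# Made-up step results, for illustration only.
steps = [(150, 150), (164, 164), (178, 160)]
print(first_failing_step(steps, 95.0))  # 178
print(first_failing_step(steps, 0.0))   # None
```

With a 0% requirement, as in the first test, no step can ever fall below the threshold, so the run never stops on failed responses.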

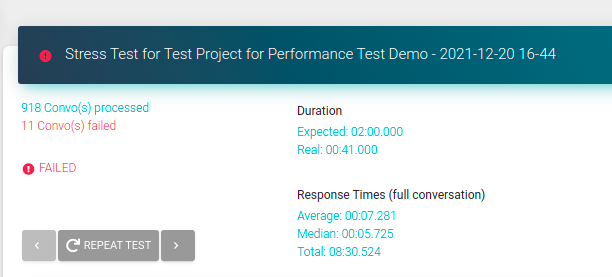

After the test finished, I see that it failed, as expected.

To be sure, I check the Failed Convos report. It contains the same timeout errors as the first test, just as I expected. That’s good.

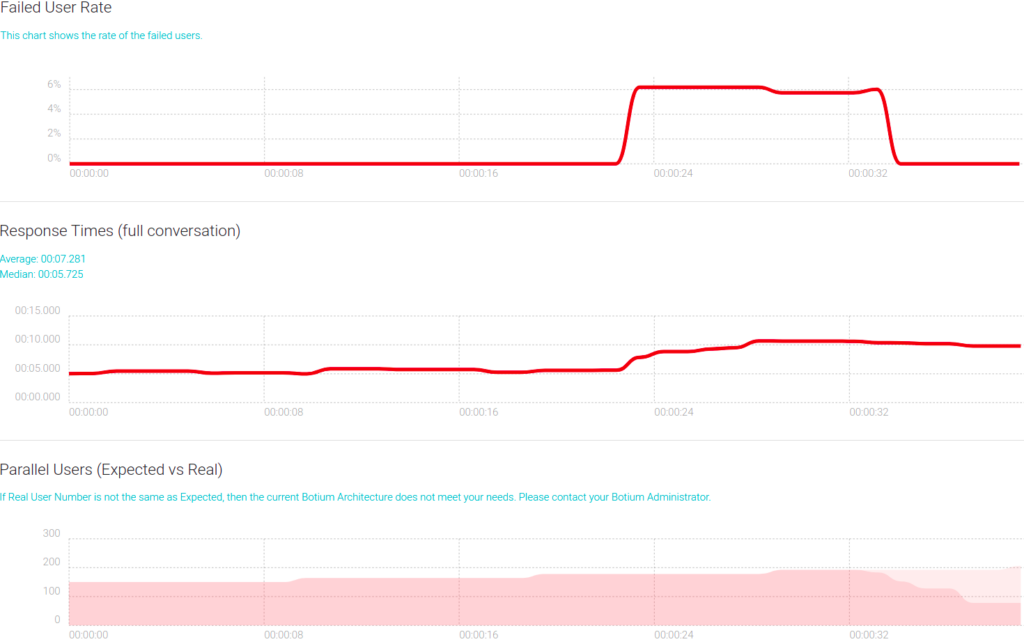

The next step is to check the charts in the stress test results.

In the first step (150 parallel users), there are no errors (first chart) and the average response time is 5s (second chart). The second step (150 + 14 = 164 parallel users) looks the same. That already marks the limit of my chatbot: I don’t want failures (responses above 10s) or average response times above 5s.
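
The two acceptance criteria (no failures, average response time of at most 5s) can be applied mechanically to per-step results. This is a hypothetical sketch: `StepResult` and `max_acceptable_users` are made-up names, the first two steps in the example use the values described above, and the third step’s numbers are invented for illustration.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class StepResult:
    users: int              # parallel users during the step
    failures: int           # timed-out or errored responses in the step
    avg_response_s: float   # average response time in seconds

def max_acceptable_users(steps: list[StepResult], max_avg_s: float = 5.0) -> Optional[int]:
    """User count of the last step before the first one with failures or a slow average."""
    best = None
    for step in steps:
        if step.failures > 0 or step.avg_response_s > max_avg_s:
            break
        best = step.users
    return best

steps = [
    StepResult(150, 0, 5.0),  # first step, as described in the text
    StepResult(164, 0, 5.0),  # second step, "looks the same"
    StepResult(178, 3, 7.5),  # invented values: some errors, slower average
]
print(max_acceptable_users(steps))  # 164
```

Stopping at the first violating step, rather than skipping over it, reflects the idea that once the chatbot is overloaded, later steps with more users are not expected to recover.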

The third step is very interesting: the average response time keeps increasing. Since the number of users does not change within a test step, we would expect a steady response time, as in the previous steps. Checking the first chart, we also see that there were some errors.
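
A standard queueing result helps explain why a rising response time at a constant user count points to overload. For a closed system, the interactive response time law states N = X (R + Z), where N is the number of users, X the throughput, R the response time, and Z the think time between a reply and the next message. Assuming, as a simplification, that the emulated users send their next message immediately (Z = 0), a fixed N gives R = N / X: response time can only climb within a step if the bot completes fewer responses per second. The helper below is a hypothetical illustration of that formula.

```python
def throughput(users: int, response_s: float, think_s: float = 0.0) -> float:
    """Interactive response time law for a closed system: X = N / (R + Z)."""
    return users / (response_s + think_s)

# First step of the second test: 150 users, about 5s average response time,
# assuming no think time between a reply and the next 'hello'.
print(throughput(150, 5.0))   # 30.0
# Same 150 users, but responses have crept up to 10s: throughput has halved.
print(throughput(150, 10.0))  # 15.0
```

Under these assumptions, the chatbot served roughly 30 responses per second in the first step; a response time rising at a constant user count means that figure is falling.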

In the last step, we can see the test teardown phase in chart 3. The emulated users do not start any new conversations; they only finish their current ones.

Back to the third step: the chatbot seems to be overloaded, receiving more requests than it is able to process. Another finding is that the response time does not recover during the teardown phase. This is probably because the response times chart is cut off at 10s by the timeout Botium uses. I suspect the response times do drop during teardown, but they stay above 10s, so we don’t see the drop.
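
The effect of the 10s cutoff can be illustrated with a tiny sketch. It assumes, hypothetically, that a timed-out response is recorded at the timeout value; if timed-out responses were instead left out of the chart, the numbers would differ, but any true response time above 10s would still be invisible. `observed_mean` is a made-up name, and the response times in the example are invented.

```python
from statistics import mean

def observed_mean(true_times_s: list[float], timeout_s: float = 10.0) -> float:
    """Average response time as recorded by a client that gives up at timeout_s.

    Anything slower than the timeout is recorded as exactly timeout_s,
    so the result can never exceed timeout_s.
    """
    return mean(min(t, timeout_s) for t in true_times_s)

# Hypothetical teardown: true response times fall from 20s to 12s,
print(observed_mean([20.0, 20.0, 20.0]))  # 10.0
# yet the recorded average stays flat at the timeout.
print(observed_mean([12.0, 12.0, 12.0]))  # 10.0
```

A real recovery only becomes visible once true response times drop below the timeout, which is consistent with the flat teardown curve described above.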

If overloading is not the cause, then maybe something is stuck there (a memory leak, for example). But I’m pretty sure it’s overloading, because the degradation is too progressive to be anything else. I will prove that in my next article.

Summary

With these two tests, I have determined the limits of my chatbot and learned how it behaves under extreme load. Based on these KPIs, I can decide on the next actions. Depending on my business requirements and the chatbot’s architecture, there are many options: fixing existing bugs, scaling horizontally or vertically, or making necessary code or architecture changes, to name a few.

Next

Stress testing alone may already be sufficient if your chatbot is built on an external service. In more complex cases, where you own at least part of the chatbot architecture, it’s strongly recommended to dig deeper. In my next article, I will cover (memory) leaks, API limits, DDoS attacks, and performance monitoring.